A Duty To Alert

Guest blog by Tim Yocum, Ops Director at Compose. Compose is a database service providing production-ready, auto-scaling MongoDB and Elasticsearch databases.

Compose users trust our team to take care of their data and their databases, with intelligent infrastructure and smart people ready to respond to alerts 24/7. That’s part of the Compose promise. But we think our users can learn a lesson from how we handle those alerts.

Growth creates complexity

A typical Compose customer has two major parts to their stack: their data storage and their application. While we handle most data storage incidents and alerts, we often find that there’s no mechanism for generating and handling alerts in the application. It’s not by design. Early on, unfortunately, your own customers are the ones letting you know that your system isn’t working, and you have to scale organically to handle those complaints, translate them into issues with your application, triage the problems and resolve them.

But as you scale up your systems and company, that organic scaling puts a load on your people and will affect response times and the quality of triage. Your application’s architecture will become more complex, with more moving parts and more subsystems. It is at this point that we often get calls about database problems that turn out to be further up the customer’s stack, in the application.

Smoke alarms

There may already be instrumentation or monitoring in some of your system components so that you get a number of alerts from your systems. By adding more instrumentation you can cover the blind spots. Other systems can also provide performance metrics and regular checks. By adding all these to the equation you can provide as much alert visibility and sensitivity as possible.

But then you realize that what you’ve created is more alerts for your people to handle. There’ll be a lot of noise, because you’ll typically find that any single failure has a ripple effect, creating alerts in different systems. Some of the failures will be like smoke alarms, ringing loudly with no obvious cause, while others will only generate one alert yet have a massive impact. On top of all that, you may be receiving these alerts from multiple monitoring systems which are tied to different people. You may be tempted to build your own alert management system… but that’s another component to monitor, and you’ll be spending more time engineering system monitoring than growing your business.
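To make the ripple effect concrete, here is a minimal sketch of how alerts from several monitoring systems might be collapsed into incidents. It is an illustration only, not how PagerDuty or Compose does it: the `Alert` fields, the choice to group by host, and the five-minute window are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    source: str       # monitoring system that raised the alert (hypothetical)
    host: str         # affected host (hypothetical)
    check: str        # what failed
    timestamp: float  # seconds since an arbitrary epoch


def group_alerts(alerts, window=300.0):
    """Group alerts for the same host that arrive within `window` seconds
    of the first alert in that host's currently open group.

    Assumption: "same host, close in time" is a good enough signal that the
    alerts share a cause. Real systems use richer deduplication keys.
    """
    incidents = []       # list of lists of Alert
    open_incident = {}   # host -> index of that host's open group
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        idx = open_incident.get(alert.host)
        if idx is not None and alert.timestamp - incidents[idx][0].timestamp <= window:
            incidents[idx].append(alert)
        else:
            open_incident[alert.host] = len(incidents)
            incidents.append([alert])
    return incidents
```

With this grouping, a disk alert from one monitor and a latency alert from another, both for the same host a minute apart, end up as one incident for one person to look at rather than two pages.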

Answering alerts at Compose

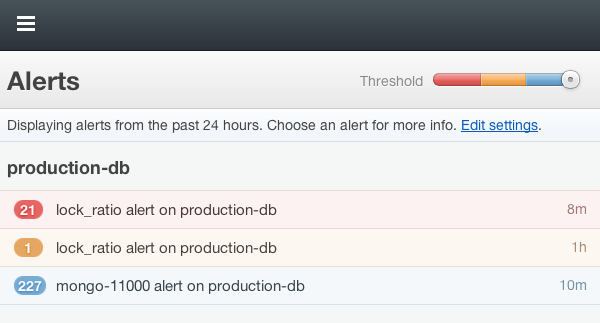

At Compose, our business is delivering databases to our customers, which is why we use PagerDuty. Our older Nagios and newer Sensu server monitoring systems both integrate with PagerDuty to report on the overall state of the servers. We then use our own Compose plugin to monitor our production systems for high lock and stepdown events, turning them into alerts too. We have a premium support offering, and we use PagerDuty to ensure rapid responses to its 911 contact points. Our 911 support emails are picked up by PagerDuty’s email hooks, while the 911 calls pass through Twilio into PagerDuty, turning those calls into alerts too.

With the alerts collected, collated and deduplicated at PagerDuty, we then use its rotation management to handle two simultaneous overlapping rotations of support staff. The lead rotation is the primary contact and the second is a backup contact. The schedules overlap, and where there are jobs that are best done by two people, we can bring both the primary and the secondary in on the job.
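As a rough illustration of a primary and backup rotation, the sketch below picks two different people for any given day. It does not use PagerDuty's scheduling; the staff names, the weekly shift length, and the rule that this shift's backup is next shift's primary are assumptions chosen for the example.

```python
from datetime import date


def on_call(staff, day, start, shift_days=7):
    """Return (primary, secondary) for `day`.

    Assumptions: shifts are `shift_days` long and begin on `start`; the
    secondary is whoever becomes primary on the following shift, so the two
    rotations overlap and never name the same person (needs >= 2 staff).
    """
    if len(staff) < 2:
        raise ValueError("need at least two people for two rotations")
    if day < start:
        raise ValueError("day is before the rotation start")
    shift = (day - start).days // shift_days
    primary = staff[shift % len(staff)]
    secondary = staff[(shift + 1) % len(staff)]
    return primary, secondary
```

Staggering the rotations this way means the backup already has context from the week before they take over as lead, which helps on jobs that need both people.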

We then add the ops team to that mix to act as an extra backup. Finally, we ensure that scheduled maintenance doesn’t unnecessarily alert the people on call – no one likes being woken up or disturbed at dinner only to find out everything is fine. We have scripts we invoke through Hubot that take hosts and services down and ensure that alerts from those systems are picked up in Nagios and Sensu and not forwarded on to PagerDuty.
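The idea of silencing alerts during planned work can be sketched in a few lines. This is not the Compose Hubot script, nor the Nagios or Sensu downtime API; `MaintenanceWindows` and its methods are hypothetical names for a simple per-host filter that decides whether an alert should be forwarded to the on-call person.

```python
from datetime import datetime, timedelta


class MaintenanceWindows:
    """Track planned downtime per host (hypothetical helper)."""

    def __init__(self):
        self._windows = {}  # host -> (start, end)

    def schedule(self, host, start, duration):
        # Assumption: one window per host; a new one replaces the old.
        self._windows[host] = (start, start + duration)

    def should_forward(self, host, when):
        """True if an alert for `host` at `when` should page someone."""
        window = self._windows.get(host)
        if window is None:
            return True
        start, end = window
        return not (start <= when < end)
```

The monitor still records the alert either way; the filter only controls whether it wakes anybody up.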

PagerDuty has become an indispensable part of Compose operations. We used to rely on manually checking multiple systems and using a calendar to work out the on-call rotation. Now, we are more effective, not just at getting alerts in a timely fashion, but also internally it helps us spread the load of delivering high quality support. We wouldn’t be without it now. – Ben Wyrosdick, co-founder of Compose