A Standard Operating Procedure for when s*IT hits the fan

This is the third in a series of posts on increasing overall availability of your service or system.

In the first post of this series, we introduced and defined some concepts of system availability, including mean time between failures (MTBF) and mean time to recovery (MTTR). In our second post, we discussed easy ways to start reducing MTTR right now. This post continues that theme with more tips on reducing MTTR and directly increasing your availability.

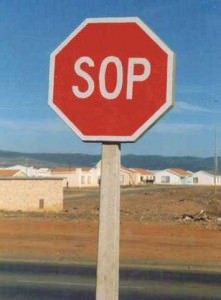

Have an S.O.P

For the really bad problems, have a Standard Operating Procedure (SOP) that everyone knows how to follow. The SOP should be a set of steps that make the problem easier to work on by improving communication and organization. This is different from the documented failure modes in the “know your enemy” section of my last post, in that an SOP is a generic procedure that can be used in pretty much any major failure scenario.

The SOP is something your primary on-call engineer can bust out when a major catastrophe happens that he or she can’t resolve within a designated timeframe, say 10 minutes.
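As a rough sketch, that trigger could be written down as a simple check. The names `should_invoke_sop` and `SOP_THRESHOLD` are made up for illustration, and the 10-minute value is only the example figure from above; pick whatever timeframe suits your team.

```python
from datetime import datetime, timedelta

# Hypothetical threshold, taken from the "say 10 minutes" example.
SOP_THRESHOLD = timedelta(minutes=10)


def should_invoke_sop(started_at: datetime, now: datetime, resolved: bool,
                      threshold: timedelta = SOP_THRESHOLD) -> bool:
    """Return True when an unresolved incident has lasted at least `threshold`."""
    return (not resolved) and (now - started_at) >= threshold
```

The point of writing the rule down is that the on-call primary doesn’t have to decide, mid-incident, whether things are “bad enough” yet.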

An example SOP might be for your on-call to:

- Start a conference call and invite others on the team. This ensures that everyone working on the problem has a quick and easy communications link, and that you don’t step on each other’s toes. You could use a dedicated conference bridge phone line, or something as simple as Skype. Just have this voice channel organized beforehand, so you don’t have to figure out everyone’s Skype ID, etc., when really pressed for time.

- Make sure there is a designated call leader or “Incident Commander” – a seasoned on-call veteran who doesn’t necessarily know the broken system in question but knows how to direct others in debugging and resolution tasks. This call leader should keep everyone on track, make sure balls don’t get dropped, and resolve disputes if they come up.

- Have a set of diagnostics that the on-call primary can start on ASAP while the call is being set up and people are joining. These diagnostics can be things like monitoring data, relevant graphs, related problems in other systems, etc., and will be immediately useful when the conference call begins.

- Have a designated chat system prepared for sharing data, links, code snippets, or whatever needs to be shared non-verbally. Some ticketing systems are good for this as well. Here at PagerDuty we use HipChat.

- If you’re part of a large organization, have a designated person who communicates with business stakeholders, such as upper management. These stakeholders will be understandably (very) interested in your fixing the problem as fast as possible, but will likely be disruptive if they join the conference call directly. Your VP probably doesn’t know the bowels and intricacies of your messaging layer or caching system, but can definitely intimidate the already-stressed engineers in a conference call. These people can, however, be very useful if major decisions need to be made that might disrupt other facets of the business (say by causing you to temporarily lose orders/requests/money/face/whatever) in order to help fix the problem at hand, so communication with them is important.
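One way to keep an SOP like this handy is to store it as a small checklist that the call leader can tick off. The sketch below is only an illustration: the class names, the `example_sop` helper, and the role assigned to each step are assumptions, not a description of any particular tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SopStep:
    description: str
    owner: str          # a role, not a named person (assignments here are illustrative)
    done: bool = False


@dataclass
class MajorIncidentSop:
    steps: List[SopStep] = field(default_factory=list)

    def pending(self) -> List[str]:
        """Descriptions of the steps nobody has completed yet, in order."""
        return [s.description for s in self.steps if not s.done]

    def complete(self, description: str) -> None:
        """Mark the first step with a matching description as done."""
        for step in self.steps:
            if step.description == description:
                step.done = True
                return
        raise KeyError(description)


def example_sop() -> MajorIncidentSop:
    # Mirrors the example steps listed above; owners are hypothetical.
    return MajorIncidentSop(steps=[
        SopStep("Start the conference call and invite the team", "on-call primary"),
        SopStep("Confirm the call leader (Incident Commander)", "on-call primary"),
        SopStep("Gather diagnostics: monitoring data, graphs, related problems", "on-call primary"),
        SopStep("Open the designated chat room", "call leader"),
        SopStep("Brief business stakeholders", "stakeholder liaison"),
    ])
```

Calling `example_sop().pending()` at any point in the incident gives the call leader a quick view of which balls could still get dropped.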

Having an SOP for really high-severity issues reduces the variability of response and resolution times, and gets everyone up to speed quickly, including business decision makers. A clear procedure to follow at the start of a large issue also reduces stress, uncertainty, and confusion.