Building Scalable Distributed Systems

Prevention is the best medicine

The best way to build a distributed system is to avoid doing it. The reason is simple — you can bypass the fallacies of distributed computing (most of which, contrary to some optimists, still hold) and work with the fast bits of a computer.

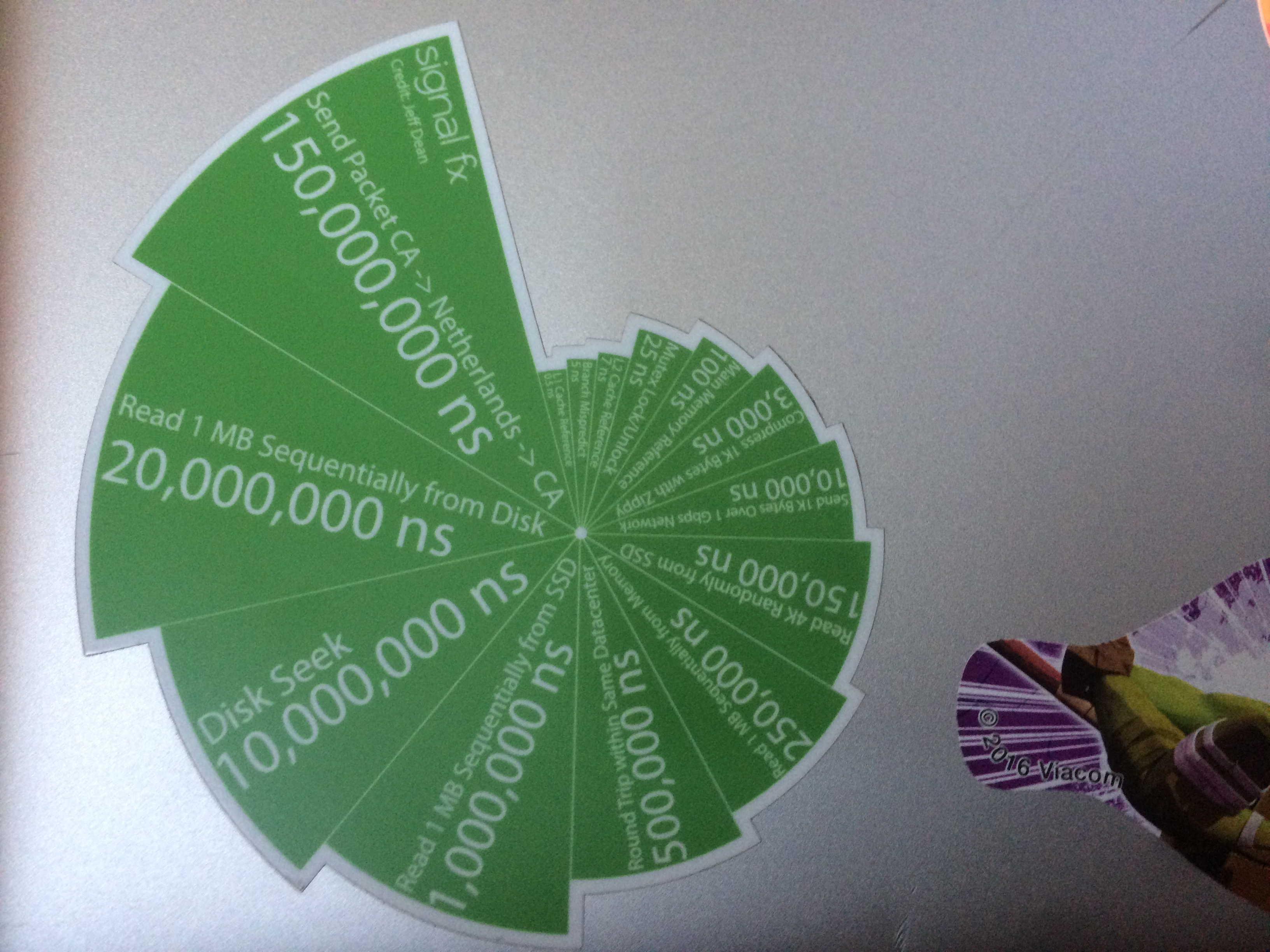

My personal laptop has a nice sticker by SignalFX; it’s a list of the speeds of various transport mechanisms. Basically, the sticker says to avoid disks and networks, especially when you go between datacenters. If you do that, and employ some mechanical sympathy, you can build awesome stuff like the LMAX Disruptor, which supports a trading platform that executes millions of transactions per second on a single node. Keep stuff in memory and on a single machine and you can go a long way; if you’re OK with maybe redoing 15 seconds’ worth of work after a failure, then you can do all work in memory and only write checkpoints to disk four times a minute. Systems like that run extremely fast, and you can sidestep the question of scaling out completely.
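To make the checkpoint idea concrete, here is a minimal sketch of "do everything in memory, snapshot every 15 seconds". The class name `CheckpointedCounter`, the JSON snapshot format, and the injectable `clock` parameter are all assumptions made for illustration; a real system would pick its own state shape and serialization.

```python
import json
import os
import tempfile
import time


class CheckpointedCounter:
    """Hypothetical example: in-memory counters with periodic snapshots to disk."""

    def __init__(self, path, interval_seconds=15.0, clock=time.monotonic):
        self.path = path
        self.interval_seconds = interval_seconds
        self.clock = clock
        self.state = self._load()
        self.last_checkpoint = clock()

    def _load(self):
        # On startup, resume from the last snapshot if there is one.
        if os.path.exists(self.path):
            with open(self.path) as f:
                return json.load(f)
        return {}

    def apply(self, key, amount):
        # The hot path only touches memory.
        self.state[key] = self.state.get(key, 0) + amount
        if self.clock() - self.last_checkpoint >= self.interval_seconds:
            self.checkpoint()

    def checkpoint(self):
        # Write to a temp file and rename it, so a crash never leaves a torn snapshot.
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w") as f:
            json.dump(self.state, f)
            f.flush()
            os.fsync(f.fileno())
        os.replace(tmp_path, self.path)
        self.last_checkpoint = self.clock()
```

Anything applied after the last snapshot is lost when the process dies, which is exactly the "redo up to 15 seconds of work" trade-off: the caller has to be able to feed that work in again.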

Don’t let yourself be fooled — distributed systems always add complexity and remove productivity. If people tell you otherwise, they are probably selling you snake oil.

Know why you’re doing it — and challenge all assumptions

There’s this requirement called “highly available” which makes it infeasible to put all your code on one node. This requirement often triggers the very expensive step up to having multiple systems involved. There are two things to do here: challenge assumptions, and challenge requirements. Does this particular system really need to have 5 nines of availability, or can we move it to a more relaxed availability tier? Especially if your software still needs to prove itself, going for HA and other bells and whistles may very well be premature optimization. Instead, skip it for now, get to market faster, and have a strategy in place to add it later on. If business stakeholders assert that yes, it needs to be “HA”, explain the trade-offs and make sure that they know they’re about to invest time and money into something they may never have a use for. (The expected outcome should be that customers won’t like the product or feature; if you only build products or features that you know customers are going to like, you’re not taking any risks and your venture will end in a boring cloud of mediocrity.)
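When you have that conversation, it helps to turn "nines" into a yearly downtime budget. The helper below is plain arithmetic; the function name `allowed_downtime_seconds` is made up for this example, and a 365-day year is assumed.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumes a non-leap year


def allowed_downtime_seconds(nines):
    """Yearly downtime budget for an availability of `nines` nines (3 means 99.9%)."""
    unavailability = 10 ** -nines
    return SECONDS_PER_YEAR * unavailability


for nines in (2, 3, 4, 5):
    minutes = allowed_downtime_seconds(nines) / 60
    print(f"{nines} nines: about {minutes:.1f} minutes of downtime per year")
```

Five nines leaves you roughly five minutes a year, while three nines leaves almost nine hours. That gap is usually a good starting point for asking which tier the system really needs.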

When you explain the CAP theorem, tell your stakeholders that they can have availability or consistency, but not both (again, some optimists say that this is not the case anymore, but I think that’s wrong). For example, if you build a system that delivers, say, notifications, they can get a system that delivers a notification exactly once most of the time (consistent, but less available) or a system that delivers a notification at least once almost always (available, but less consistent). Usually, eventually consistent (AP) systems need less coordination, so they are simpler to build and easier to scale and operate. Try to see whether you can get away with one; it’s usually worth the exercise of redefining your problem so that an AP solution fits.

Remember — if you can’t avoid it, at least negotiate it down towards something simple. Not having to implement a complex distributed system is the best way to have a distributed system.

Make your life simple

Complexity is the enemy of our trade, so whatever system you’re designing or code you’re writing, you need to play this game of whack-a-mole where complexity pops up and you hammer it right back into the ground. This becomes even more important as soon as you write software that spans more than one system — distributed systems are intrinsically complex, so you should have no patience with accidental complexity. Some things in distributed systems are simpler to implement than others — try to stick with the simple stuff.

Distributing for HA

There are several ways to increase availability. You can have a cluster of nodes and coordinate everything (save work state all the time so any node can pick up anything), but that requires a lot of coordination. Coordination makes stuff brittle, so maybe you can do without it? There are various options to avoid coordination and still have good availability:

- Run the same work in parallel but use the output of only one system. Everything is replicated on a secondary node, so when the primary node fails, the replication ensures the backup node is “hot” and can take over in a blink. Coordination is then just deciding which node is the primary and which node is the secondary backup.

- Have a spare standby. The primary node regularly persists work on some shared storage and if it stops working, the secondary reads that and takes over. Coordination here is usually the secondary keeping an eye on the primary to see whether a takeover is needed.
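The second option can be sketched in a few lines. This is a deliberately naive illustration: the `Standby` class, the heartbeat callback, and the `load_checkpoint` function are hypothetical names, and the sketch ignores the hard part, which is that a timeout cannot tell a dead primary from a slow one, so a real setup also needs some way to fence off the old primary.

```python
import time


class Standby:
    """Hypothetical secondary that takes over when the primary's heartbeat goes stale."""

    def __init__(self, timeout_seconds=5.0, clock=time.monotonic):
        self.timeout_seconds = timeout_seconds
        self.clock = clock
        self.last_heartbeat = clock()
        self.active = False
        self.state = None

    def on_heartbeat(self):
        # Called whenever a heartbeat from the primary arrives.
        self.last_heartbeat = self.clock()

    def check(self, load_checkpoint):
        # Called periodically; promotes this node if the primary has been silent too long.
        silent_for = self.clock() - self.last_heartbeat
        if not self.active and silent_for > self.timeout_seconds:
            # Pick up whatever the primary last persisted on shared storage.
            self.state = load_checkpoint()
            self.active = True
        return self.active
```

Note that nothing here happens per transaction: the only decision the nodes ever coordinate on is who is currently in charge.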

In both cases, coordination moves from “per transaction” to “per configuration”. Distributed work transactions are hard, so if you can get away with configuration-level coordination, do so. Often, this involves replaying some work — an “exactly once” work process becomes “almost always exactly once unless a machine dies and then we replay the last minute to make sure we don’t miss anything.” Modelling operations to be idempotent helps; sometimes, there’s no avoiding duplicate operations becoming visible and you need to chat with the stakeholders about requirements. Get an honest risk assessment (how often per year do machines just die on you?), an honest impact assessment (how much stuff will be done twice and how will this inconvenience users), and an honest difficulty assessment (extra work, more complexity which begets more brittleness which results in less availability).
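Here is a small sketch of why idempotent operations make replaying cheap. It assumes every operation carries a unique ID and that the receiving side remembers which IDs it has applied; `IdempotentInbox` and `process` are names invented for this example, and the in-memory set stands in for state that would have to be durable in practice.

```python
class IdempotentInbox:
    """Hypothetical receiver that ignores operation IDs it has already applied."""

    def __init__(self):
        self.applied_ids = set()
        self.messages = []

    def deliver(self, op_id, message):
        if op_id in self.applied_ids:
            return False  # duplicate caused by a replay, safe to drop
        self.applied_ids.add(op_id)
        self.messages.append(message)
        return True


def process(log, inbox, start=0):
    """Deliver every logged operation from `start` onward; return how many were new."""
    return sum(inbox.deliver(op_id, message) for op_id, message in log[start:])


log = [(1, "disk full"), (2, "cpu high"), (3, "replica lag"), (4, "disk ok")]
inbox = IdempotentInbox()

process(log[:3], inbox)        # the primary handles three operations, then dies
process(log, inbox, start=1)   # the standby replays from an older position
print(inbox.messages)          # each message shows up once, despite the overlap
```

When the receiver cannot deduplicate (an SMS gateway, say), the replayed operations become visible to users, and that is the point where the conversation with stakeholders has to happen.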

Sometimes you need availability even when datacenters fail. Be extra careful in that case, because things will become extra brittle extra quick, and you’ll want to make sure to only require a minimal amount of coordination.

Distributing for performance

Sometimes you can’t just get all the work done in a single node. First, try not to be in that position. Open up the hood and see where you are wasting cycles. Those pesky LMAX people showed you can do 7-figure transactions per second on a single machine, so it might be enough to go to Amazon for a bigger instance. By now, I would expect decent software to be multi-core capable, so you can indeed get a quick fix by getting beefier hardware. Moreover, if you cannot organize your code to run faster with more cores, you probably have no chance of making it faster by adding more nodes, do you? Even without LMAX-level engineering, I think it is reasonable to expect your software to handle at least a low 5-digit number of business operations per second. If you want to scale out because one node can’t handle a couple of hundred of them per second, you may want to go back to the drawing board first. Most likely, you have some issues in your code that need to be addressed.

When you have to add more machines to crack the problem (this is a great problem to have!), plan it so that coordination is minimal.

- Use configuration coordination over transaction coordination; have your nodes use some coordination scheme to divide up the work and then have them process their own chunks without the need for further coordination. You can add an HA aspect here quite simply by having the nodes redistribute work when one becomes unavailable.

- Try to find the embarrassingly parallel bits of work so you don’t need any coordination at all. Stateless web servers come to mind here as a good example, but they’re not the only place where you can just throw an uncoordinated bunch of nodes at a problem.
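One way to divide up work with configuration-level coordination only is to let every node compute the same partition-to-node mapping from the list of live nodes. The sketch below uses rendezvous (highest-random-weight) hashing for that; this is one possible scheme chosen for illustration, not something the approach above prescribes, and the node names and partition count are made up.

```python
import hashlib


def owner(partition, nodes):
    """Pick the owning node for a partition using rendezvous hashing."""
    def weight(node):
        digest = hashlib.sha256(f"{node}:{partition}".encode()).digest()
        return int.from_bytes(digest[:8], "big")
    # Sorting first makes the result independent of the order nodes are listed in.
    return max(sorted(nodes), key=weight)


def assignment(partitions, nodes):
    """Map every partition to its owner; each node can compute this on its own."""
    return {p: owner(p, nodes) for p in partitions}


partitions = range(64)
before = assignment(partitions, ["node-a", "node-b", "node-c"])
after = assignment(partitions, ["node-a", "node-c"])  # node-b became unavailable

moved = [p for p in partitions if before[p] != after[p]]
# Only partitions that node-b owned change hands; the rest stay where they were.
print(all(before[p] == "node-b" for p in moved))
```

The only thing the nodes have to agree on is the membership list. Once they do, each one knows its chunks without talking to anyone, and losing a node only moves that node's share of the work.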

Storage is cheap, use it

Architectural patterns like Command/Query separation and Event Sourcing decouple and often duplicate data storage into multiple specialized stages. These specialized stages work well to support distributed designs, as you can choose what to keep local and what to distribute so you come up with a hybrid solution that minimizes coordination. For example, you can write update commands to a distributed Kafka cluster, but have everything downstream from there operate local and separate (e.g. consumers process the update commands and independently update ElasticSearch nodes that are used for querying). The “real” data is highly available and coordinated in message streams — systems just use views of that data for specialized processing like search, analytics, and so on. Such a system is much easier to maintain than the classical configuration where a central database system is the nexus of all the operations and inevitably becomes the bottleneck — whether the database system was built for scalability or not.
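The shape of such a design can be sketched in memory. Below, a plain Python list stands in for the durable command stream (the role Kafka plays in the example above), and two views stand in for specialized downstream stores. All class names and the command fields `id`, `service`, and `title` are assumptions made for illustration; no real Kafka or ElasticSearch API is used.

```python
from collections import defaultdict


class CommandLog:
    """Append-only list standing in for a durable, replicated stream."""

    def __init__(self):
        self._entries = []

    def append(self, command):
        self._entries.append(command)

    def read_from(self, offset):
        return self._entries[offset:]


class View:
    """A consumer that tracks its own offset and builds its own copy of the data."""

    def __init__(self, log):
        self.log = log
        self.offset = 0

    def catch_up(self):
        for command in self.log.read_from(self.offset):
            self.apply(command)
            self.offset += 1

    def apply(self, command):
        raise NotImplementedError


class SearchView(View):
    """Keeps a word-to-ids index, the way a search node might."""

    def __init__(self, log):
        super().__init__(log)
        self.index = defaultdict(set)

    def apply(self, command):
        for word in command["title"].lower().split():
            self.index[word].add(command["id"])


class CountView(View):
    """Keeps per-service counts, the way an analytics store might."""

    def __init__(self, log):
        super().__init__(log)
        self.per_service = defaultdict(int)

    def apply(self, command):
        self.per_service[command["service"]] += 1
```

Each view only ever talks to the log, never to another view, so one of them can fall behind, crash, or be rebuilt from offset zero without the other noticing.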

Feel free to store data redundantly and have multiple independent systems each use their own optimized form of the data. This takes less coordination, which eventually pays for the relatively small increase in storage cost.

Shed the NIH syndrome — your wheel already got reinvented elsewhere

Unless you operate at the scale of Google, the system you’re about to take into the realm of distributed systems is not so special that you have to build it from scratch. It’s quite likely that you’re paid to solve business problems, not to build tools and infrastructure, so there’s zero reason to figure stuff out for yourself in 2017. Implementing a distributed system correctly is hard, so you will likely get it wrong (the same advice holds for persistence and cryptography, by the way). If you think you have a unique problem and need to roll your own, you either haven’t looked hard enough or you haven’t tried hard enough to shape your problem into a form that makes using any of the hundreds of open source projects out there a possibility. You’ve been pushing “the business” to help shape the requirements into a form that makes a distributed solution much simpler (and thus more reliable). Now, push yourself to find the right software out there to solve the non-unique parts of your problem, so you can focus on what makes your company special.

Yes, tool-smithing is fun — I love it and I could do it all day long. And indeed, framing your problem in a form that makes you look like a unique snowflake is good for your self-esteem. Ditch it and go on solving some real problems, the sort that makes your business successful.