Chef at PagerDuty

This is the first post of a multi-part series on some of the operations challenges that the team at PagerDuty is solving.

At PagerDuty we strive for high availability at every layer of our stack. We attain this by writing resilient software that then runs on resilient infrastructure. We take this into account when we design our infrastructure automation. We assume that pieces will fail and that we need to either replace or rebuild pieces quickly.

For this first post about our Operations Engineering team, we will cover how we automate our infrastructure using Chef, a highly extensible, Ruby-based, search-driven configuration management tool, and which practices we have learned along the way. We will describe our typical workflow and how we ensure that we can safely roll out new, resilient, and predictable infrastructure.

The Team

Before diving into the technical details, here is some context about the team behind the magic. Our Operations Engineering team at PagerDuty is currently made up of 4 engineers. The team is responsible for a few areas: infrastructure automation, host-level security, persistence/data stores, and productivity tools. The team is made up of generalists, with each team member having 1-2 areas of depth. While the Operations Engineering team has its own PagerDuty on-call rotation, each engineering team at PagerDuty also participates in on-call.

The Hardware

We currently own 150+ servers spanning multiple cloud providers. The servers are split into multiple environments (Staging, Load Test, and Production) and multiple services (app servers, persistence servers, load balancers, and mail servers). Each of our three environments has a dedicated Chef server to prevent hosts from polluting other environments.

The Workflow

The Chef code base is 3 years old and has around 3.5k commits.

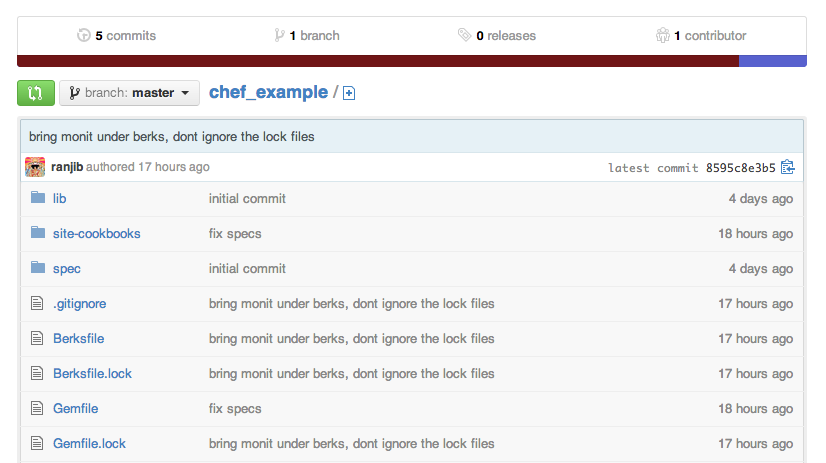

The skeleton of our Chef repository looks like this:

- git repo

- cookbooks (stores community cookbooks that contain our customizations)

- site-cookbooks (stores our wrappers around community cookbooks, our custom cookbooks, LWRPs, etc.)

- data_bags (stores all data bags that are not encrypted)

- lib (ruby libraries that are used across site-cookbook/* and knife plugins)

- roles (stores all roles)

We use the standard feature branch workflow for our repo. A feature can be tactical work (spawning a new type of service), maintenance work (upgrading or patching), or strategic work (infrastructure improvements, large-scale refactoring, etc.). Feature branches are unit tested by Jenkins, which constantly watches GitHub for new changes. We then use the staging environment for integration testing: feature branches that pass the unit tests are deployed to the staging environment’s Chef server.

Although it depends on the feature, most branches then go through a code review via a pull request. The code review is purposefully manual: we make sure that at least one other team member gives a +1 on the code. If there is a larger debate on the code, we block out time during our team meetings to discuss it. From there, the feature branch is merged and we invoke our restore script, which deletes all existing cookbooks from the Chef server and uploads all roles, environments, and cookbooks from master. The restore process generally takes less than a minute.

We do not follow a strict deployment schedule; we prefer to deploy whenever we can. Unless it’s a hot-fix, we prefer to deploy during office hours, when everyone is awake. We run chef-client once a day, throughout the week, via cron. If we need on-demand Chef execution, we use pssh or knife ssh with a controlled concurrency level.
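To make "controlled concurrency level" concrete, here is a minimal Ruby sketch of the idea: a fixed number of workers pull hosts from a queue, so no more than that many hosts are touched at once. This is not how pssh or knife ssh are implemented; the `run_on_hosts` helper and the host names are hypothetical, and the block only returns a string where a real run would shell out over SSH.

```ruby
require 'thread' # Queue and Mutex (built in on modern Rubies; harmless to require)

# Run `task` once per host, with at most `concurrency` hosts in flight.
# Returns a hash of host => result (or the exception raised for that host).
# `run_on_hosts` is a hypothetical helper written for this illustration.
def run_on_hosts(hosts, concurrency:, &task)
  raise ArgumentError, 'concurrency must be at least 1' if concurrency < 1

  queue = Queue.new
  hosts.each { |host| queue << host }
  results = {}
  lock = Mutex.new

  worker_count = [concurrency, hosts.size].min
  workers = Array.new(worker_count) do
    Thread.new do
      loop do
        host = begin
          queue.pop(true) # non-blocking pop raises ThreadError once the queue is empty
        rescue ThreadError
          break
        end
        outcome = begin
          task.call(host)
        rescue StandardError => e
          e # record the failure and keep going with the remaining hosts
        end
        lock.synchronize { results[host] = outcome }
      end
    end
  end

  workers.each(&:join)
  results
end

# Usage with made-up host names; the block is a stand-in for an SSH call.
outcomes = run_on_hosts(%w[app-1 app-2 app-3], concurrency: 2) do |host|
  "would run chef-client on #{host}"
end
outcomes.each { |host, outcome| puts "#{host}: #{outcome}" }
```

Capping the number of workers keeps a bad change from reaching every host at the same moment, which is the main reason to limit concurrency for on-demand runs.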

Chef Testing

All PagerDuty custom cookbooks have a spec directory containing ChefSpec-based tests, and we recently migrated to ChefSpec 3. We use the stubbing capabilities of ChefSpec and RSpec extensively, as the vast majority of our custom recipes use search, encrypted data bags, etc.

Apart from the cookbook-specific unit tests that reside in the spec subdirectory of individual cookbooks, we have a top-level spec directory that holds functional and unit tests. The unit tests are mostly ChefSpec-based role or environment assertions, while the functional tests are all LXC- and RSpec-based assertions. The functional test suite uses chef-zero to create an in-memory server, then uses the restore script and the chef restore knife plugin to emulate a staging or production server. We then spawn an individual LXC container per role using the same bootstrap process as our production servers. Once a node converges successfully, we make assertions based on its role. For example, a ZooKeeper functional spec will telnet locally and run ‘stats’ to see if requests can be served. This covers most of our code base, except the integration with individual cloud providers.
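The ZooKeeper check described above boils down to "open a local TCP connection, send a command, and assert that something comes back". A rough plain-Ruby sketch of that kind of assertion follows. It is not our actual spec code: `query_service` and `assert_serving!` are hypothetical helper names, and the sketch assumes the service closes the connection after replying.

```ruby
require 'socket'
require 'timeout'

# Send one command to a TCP service and return everything it writes back.
# Assumes the service closes the connection after replying; if it does not,
# the read is cut short by Timeout::Error after `timeout` seconds.
# `query_service` is a hypothetical helper written for this illustration.
def query_service(host, port, command, timeout: 5)
  Timeout.timeout(timeout) do
    TCPSocket.open(host, port) do |socket|
      socket.write(command)
      socket.read # reads until the remote end closes the connection
    end
  end
end

# Fail loudly if the service answered with nothing useful.
def assert_serving!(response)
  if response.nil? || response.strip.empty?
    raise 'service returned an empty response'
  end
  response
end

# Example call, left commented out because it needs a live service.
# It assumes a ZooKeeper node on its default client port (2181):
#   assert_serving!(query_service('127.0.0.1', 2181, 'stats'))
```

In a real functional spec the same call would sit inside an RSpec example and run from within the converged container, so a failed connection or an empty reply fails the spec for that role.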

Cookbook Management

We rely heavily on community cookbooks. We try not to create a cookbook if there is a well-maintained open source alternative. We prefer to write wrapper cookbooks, named with a “pd” prefix, that layer our customizations on top of the community cookbooks. An example is the pd-memcached cookbook, which wraps the memcached community cookbook and provides iptables rules and other PagerDuty-specific customization.

Both the community cookbooks and our PagerDuty custom cookbooks are managed by Berkshelf. All custom cookbooks (pd-*) stay inside the site-cookbooks directory in the Chef repo.

We use several custom knife plugins. Two of them, chef restore and chef backup, take care of fully backing up and restoring our Chef server (nodes, clients, data bags). With these, we can easily move Chef servers from host to host. Other knife plugins are used to spawn servers, perform teardowns, and check the status of third-party services.

Gaining Confidence via Testing and Predictability

Currently, we are confident about our ability to spawn and safely tear down our infrastructure when we have the appropriate tests in place. When we initially took a TDD approach to our infrastructure, there was a steep learning curve for the team. We still run into issues when we spin up nodes across multiple providers, and network dependencies on external configurations (e.g. hosted monitoring services, log management services) have introduced additional failure modes and security requirements. We have responded to these challenges by adopting aggressive memoization techniques and by introducing security testing automation tools (e.g. gauntlt) into the operations toolkit (more on this in a later post).
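As a generic illustration of memoization in this setting (not our actual code), the sketch below caches the result of an expensive lookup, such as a search or a call to an external service, so that it runs once per distinct key. The `MemoizedLookup` class and the query string are hypothetical.

```ruby
# Cache the result of an expensive lookup so it runs once per distinct key.
# `MemoizedLookup` is a hypothetical class written for this illustration.
class MemoizedLookup
  def initialize(&fetcher)
    @fetcher = fetcher
    @cache = {}
  end

  def fetch(key)
    # Hash#fetch only runs the block on a cache miss, so nil and false
    # results are cached too (the `||=` idiom would recompute them).
    @cache.fetch(key) { @cache[key] = @fetcher.call(key) }
  end
end

# Usage: the counter stands in for a slow network round trip.
calls = 0
lookup = MemoizedLookup.new do |query|
  calls += 1
  "results for #{query}"
end

lookup.fetch('role:zookeeper')
lookup.fetch('role:zookeeper') # served from the cache, fetcher not called again
puts calls # prints 1
```

Fewer round trips to external systems means fewer chances for a run to fail on a flaky network dependency, at the cost of possibly working with slightly stale data within a single run.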

Key challenges remain: cross-component versioning issues, and the upfront, proactive effort needed to keep dependencies up to date. Some code quality issues in community cookbooks have also hampered us. But we understand that these are complex, time-bound problems, and we are part of the bigger community responsible for fixing them.