Not breaking your Google Analytics (like a pro)

As a general rule, whatever percentage you think your test coverage is, it isn’t. Whatever amount of the known surface area you’re covering, there’s going to be an exciting swath of things you didn’t realize that you need to test. Analytics fell into that bucket for us.

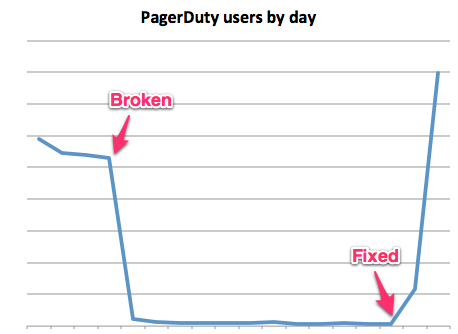

We use Google Analytics in our webapp to get a feel for how users use the product, most recently to determine which functionality was prioritized for the mobile site. I generally look at our analytics every week or two to help developers out, so when Simon asked me to see how popular the mobile site was, I was pretty sure the answer was not “It decreased the use of our webapp by 98%.”

I won’t name names, but the culprit rhymes with “itwasian”.

What broke

Our UI consists almost entirely of HAML powered by backbone.js, often at the same time. Which meant that we refactored the default Google Analytics code:

    :javascript
      var gaJsHost = (("https:" == document.location.protocol) ? "https://ssl." : "http://www.");
      document.write(unescape("%3Cscript src='" + gaJsHost + "google-analytics.com/ga.js' type='text/javascript'%3E%3C/script%3E"));
    :javascript
      //

You’ll notice that this generates two JavaScript blocks, which we helpfully merged into one.

That’s what broke everything, and by everything, I’m excluding some mobile and rare browsers that still executed the code as intended. For 98% of our visitors, merging those two script blocks meant that the browser never got control back after the document.write, so ga.js had not been loaded and run by the time _gat was referenced. _gat doesn’t exist at that point, the script throws, and that’s the end of our analytics on this page.
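To make the ordering problem concrete, here is a toy model rather than real browser code. It assumes a made-up `runPage` helper in which anything passed to `write` only executes after the current block finishes, which mimics the behaviour described above. `fakeGaJs`, `loadGa` and `useGa` are hypothetical stand-ins, not part of Google Analytics.

```javascript
// Toy model of a page: scripts injected via write() only run after the
// current block has finished. All names here are illustrative assumptions.
function runPage(blocks) {
  var globals = {};   // stands in for window
  var pending = [];   // scripts written but not yet executed
  var page = {
    globals: globals,
    write: function (script) { pending.push(script); }
  };
  var errors = [];
  blocks.forEach(function (block) {
    try { block(page); } catch (e) { errors.push(e.message); }
    // The "browser" regains control here and runs any injected scripts.
    while (pending.length) { pending.shift()(globals); }
  });
  return errors;
}

// Hypothetical stand-in for ga.js: defines _gat when it finally runs.
function fakeGaJs(globals) {
  globals._gat = { _getTracker: function () { return {}; } };
}
function loadGa(page) { page.write(fakeGaJs); }           // first block
function useGa(page) { page.globals._gat._getTracker(); } // second block

// Two separate blocks: the injected script runs in between, so no errors.
var separate = runPage([loadGa, useGa]);

// One merged block: _gat is used before the injected script has run.
var merged = runPage([function (page) { loadGa(page); useGa(page); }]);
```

In this model `separate` ends up empty while `merged` holds one error message, which is the same shape of failure we saw in production.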

The simple fix is, of course, to put the second script block back in. But instead we moved to the newer asynchronous Google Analytics code, which doesn’t need two blocks: it only requires _gaq to exist as a JavaScript array that queues up commands, with the rest of the functionality coming later, whenever the browser gets around to loading ga.js.

    :javascript
      var _gaq = _gaq || [];
      _gaq.push(['_setAccount', 'UA-8759953-1']);
      _gaq.push(['_setDomainName', 'pagerduty.com']);
      _gaq.push(['_trackPageview']);

      (function() {
        var ga = document.createElement('script'); ga.type = 'text/javascript'; ga.async = true;
        ga.src = ('https:' == document.location.protocol ? 'https://ssl' : 'http://www') + '.google-analytics.com/ga.js';
        var s = document.getElementsByTagName('script')[0]; s.parentNode.insertBefore(ga, s);
      })();
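The reason this version is safe to keep in one block is the command-queue pattern: pushing onto a plain array can never fail, whether or not the library has arrived. The sketch below illustrates that pattern in isolation. `createTracker` and `loadLibrary` are hypothetical helpers written for this example (they are not the ga.js internals), and the account ID is a placeholder.

```javascript
// Hypothetical stand-in for the tracker the real library would provide.
function createTracker(log) {
  return {
    _setAccount: function (id) { log.push('account:' + id); },
    _trackPageview: function () { log.push('pageview'); }
  };
}

// Before the library loads, _gaq is a plain array, so push just stores commands.
var _gaq = [];
_gaq.push(['_setAccount', 'UA-XXXXX-X']); // placeholder ID
_gaq.push(['_trackPageview']);

// Simulates the library arriving: drain the queued commands in order, then
// hand back an object whose push() runs each new command immediately.
function loadLibrary(queue, tracker) {
  function run(command) {
    var method = command[0];
    var args = command.slice(1);
    tracker[method].apply(tracker, args);
  }
  queue.forEach(run);
  return { push: run };
}

var log = [];
_gaq = loadLibrary(_gaq, createTracker(log));

// After loading, the same calling code still works, but executes right away.
_gaq.push(['_trackPageview']);
```

The page code only ever calls `_gaq.push(...)`, so it does not care whether it runs before or after the library has loaded.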

Testing that Google Analytics is working

To catch this sooner in the future, I’ve set up some Intelligence Events on our application and the website inside Google Analytics to detect abnormally low or high numbers of visitors.

There’s at least a day’s delay before the email gets sent out, which is a shame (I received the last one while writing this) but it’s another layer in our web of alerting. Log into your Google Analytics account, and you’ll see “Intelligence Events”:

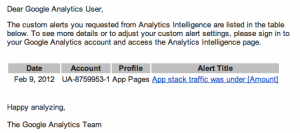

I intend to set up some more advanced heuristics later, but for now let’s just test that analytics is working.

For testing, that day of lag had one advantage: it sent me yesterday’s alert, from when our analytics were still broken (but I’m really stretching to call that an advantage).
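For a sense of what an “abnormally low or high” check can look like, here is a minimal sketch. It is not how Intelligence Events works internally. It assumes you have daily visit counts available somehow, and `classifyVisits`, the tolerance value and the sample numbers are all made up for illustration.

```javascript
// Compares today's visit count to the mean of recent days.
// Returns 'low', 'high', or 'ok'. tolerance is a fraction, e.g. 0.5 = 50%.
function classifyVisits(history, today, tolerance) {
  if (history.length === 0) {
    throw new Error('need at least one prior day of data');
  }
  var sum = history.reduce(function (a, b) { return a + b; }, 0);
  var mean = sum / history.length;
  if (today < mean * (1 - tolerance)) { return 'low'; }
  if (today > mean * (1 + tolerance)) { return 'high'; }
  return 'ok';
}

// Made-up daily visit counts for the previous week.
var lastWeek = [1000, 1100, 950, 1050, 1020, 980, 1000];

var brokenDay = classifyVisits(lastWeek, 20, 0.5);   // a 98% drop
var normalDay = classifyVisits(lastWeek, 1030, 0.5);
var spikeDay = classifyVisits(lastWeek, 2000, 0.5);
```

A plain trailing mean is crude (it ignores weekends, for a start), but it would have flagged a 98% drop on day one.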

Tying it all in to PagerDuty

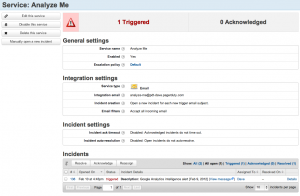

This isn’t the kind of alert I want to be woken up in the middle of the night for, but I still use PagerDuty as an incident management system for my analytics alerts. That’s partially for the dogfooding, but I also use it to track our media mentions, Twitter mentions, etc.

For this I’ve set up a service that doesn’t auto-resolve or expire acknowledgements, to track everything that emails “analyze-me@pdt-dave.pagerduty.com”.

Test all the things

So now I’m filling up our FogBugz with new things to test:

- Our t-shirt mailings

- Whether we respond to customer inquiries quickly enough

- Our load times across the website, app stack, blog and the support site (again with automated alerts from Google). We already test this with New Relic.

I don’t have a good procedure for determining what we’re forgetting to test, but I do have a couple of principles:

- If it fails once, it gets tested forever. I was kind of expecting this to get lost in the shuffle, but apparently we never sent t-shirts to one or two people that I promised them to, so that’s been automated and I now have an ad hoc report of who has and hasn’t received their shirts. When push comes to crazy, I may integrate it with USPS tracking.

- It needs to be automatic, ideally sending you an alert when some measurement is out of band. We track our time to resolution with Zendesk, so one of my projects is to automate those metrics with automations.

- Be a jerk. I’m testing other people’s work and trying to get it to fail. I don’t care what the reliability team’s metrics say, if my page load time spikes, I’m going to demand some answers.

We’re still a young company, but we’re fiercely dedicated to uptime. When you’re dealing with bugs, there are known unknowns and unknown unknowns, and when I started here I never would’ve known how much I’d enjoy shooting Nerf guns across the office whenever the average page load time increases.

(With any luck, this will be the first post in a series on what happens when you make a mathematician do your marketing.)