Engineering Blog

What is Software Understandability?

Liran Haimovitch is the co-founder and CTO of Rookout, a modern software debugging platform.

Back in my early days at Rookout, I had the privilege of working with a large and well-known enterprise. They were heavily data-driven and had even developed a custom NOC solution. As it turned out, one of their engineers could not log on to that application. No matter what he did, he ended up getting an obscure error message from Google. The team responsible for that application had chased that bug for over six months. They scanned through the authentication flow dozens of times and still had no idea how such a thing could happen.

That’s the bane of modern software engineering. Software applications in general, and cloud-native applications in particular, are becoming sprawling and complicated affairs. Services, both first- and third-party, are becoming more interdependent. Customization options are growing out of control. The internet is hell-bent on throwing the most bizarre and unexpected inputs our way. And so, with engineering turnover, we find more and more teams failing to understand the software they are responsible for developing and maintaining.

Defining Understandability

To tackle that issue, we need to start by giving it a name: understandability. Drawing inspiration from the financial industry, we define understandability as:

“Understandability is the concept that a system should be presented so that an engineer can easily comprehend it.”

When a system is understandable, engineering operations become a straightforward process. Every time you and your team face a task, you just know what you need to do. It doesn’t matter if it’s developing new features, tackling customer issues, or updating the system configuration. When you comprehend the software, you know how to execute in a consistent, reliable manner, without unnecessary back and forth.

What Happens During Incident Response?

When your phone is going off in the middle of the night because something is wrong, your understanding of the application is vital. First of all, you have to use the information you have to verify this is an actual service disruption. Second, you need to triage the incident, understand the interruption’s impact, and identify its general area. Last, you either work around it yourself or escalate it appropriately.

If you have a clear mental image of the application, and high-quality data is available to you, you can expect to perform reasonably well in all of those tasks. You’ll be able to make faster and better decisions in every situation and are much more likely to be able to resolve it yourself. If you have a poor understanding of the application, you likely won’t perform as well.

When it comes to on-call rotations, poor understandability will have a significant impact on teamwork. In some services, new engineers join the on-call rotation quickly, while in others, it takes them a long time to acquire the necessary level of knowledge and confidence. But even more critical (as we all love our bedtime), how many engineers does it take to figure out and fix a single service disruption?

How Does it Impact Bug Resolution?

Understandability also plays a massive role in resolving bugs. We have all had that eureka moment when a colleague approached us to report a bug, and even before they finished the sentence, we knew what was wrong and which line of code needed fixing. Unfortunately, in complex software environments, more often than not, that’s not the case.

Today, when facing a bug, you have to take into account:

- Source code. More specifically, the version(s) deployed at the time of the bug occurring.

- Configuration and state. This includes everything from the number of servers we are running, through custom settings defined for the user, to previous application actions.

- Runtime environment. This includes the operating system, container runtime, language runtime, multi-threading model, databases in use, and so much more.

- Service dependencies. Everything our service relies on, whether first- or third-party, may be impacting the behavior.

- Inputs. Potentially the most crucial part, what exactly were the inputs that resulted in the issue?

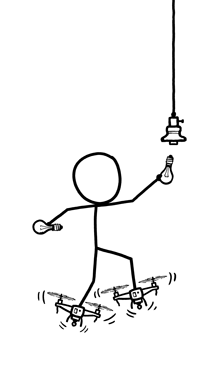

The more you understand the application you are responsible for, the easier it is to put the pieces together. It becomes easier to collect the information you need, and you need less of it to see the bigger picture. The less you understand, the more you will find yourself relying on other people and struggling to collect more information. If all else fails, you just may find yourself pushing log lines to production to try and figure out what is going on.

What Can You Do About It?

If you think through the past few months, you will likely notice both positive and negative examples of understandability. In some cases, you were knowledgeable about the software and quickly and efficiently carried out tasks. Things might have been more challenging in other places, with jobs requiring help from other engineers and sometimes even requiring some back and forth to get it right.

Fortunately, when it comes to software understandability, there’s always room for improvement. There are several tried and true approaches to get you there:

- Minimizing complexity will create a higher-quality, more comfortable environment in which to comprehend software.

- Carefully curating knowledge will make for a gentler learning curve and provide resources to patch over information gaps.

- Building high-quality development environments will enable engineers to experiment with the application with real-world input and configuration examples.

- Deploying observability tooling will provide engineers with some feedback about the application’s behavior.

- Adopting traditional and next-generation debuggers will empower engineers to dive into their code as it’s running.

Summary

Software understandability is a crucial factor in software engineering, measuring how easy it is to comprehend applications. Understandability empowers your everyday efforts, and poor understanding is painfully apparent, especially when it comes to incident response and bug resolution. We have the essential techniques to improve understandability, and you can read more about them here.

What about the state of your software (and systems)? Are they mostly easily understandable or have you been struggling? We’d love to talk to you and help where needed, if you’d like to commiserate or ask questions. Please feel free to engage either with Liran directly on Twitter (@Liran_Last) or with us on PagerDuty’s community forum! You can also listen to the Page It to the Limit podcast that accompanies this blog post, here.

Wait! What Was the Bug?

That NOC application used Google’s social login for authentication. They had inadvertently passed a flag requesting Google to include the full user profile within the login token. Later in the code, they had a safety check, trimming the token above a certain length and rendering it invalid. As the application validated the token, it responded to validation failures by sending the user’s browser to re-authenticate.

That engineer had a large user profile within their GSuite account. That led to an enormous token, resulting in too many authentication attempts, and finally, that infamous error message. Within that safety check, there was a TODO comment to add a logline for this abnormal situation. Nothing is more painful than the logline that should have been there but wasn’t.
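As a rough illustration of what that missing log line might have looked like, here is a minimal Python sketch of a length check that records when it trims a token. The original application's language, threshold, and code are not known, so `MAX_TOKEN_LENGTH`, `enforce_token_limit`, and the `auth` logger name are all hypothetical.

```python
import logging

logger = logging.getLogger("auth")  # hypothetical logger name

# Hypothetical limit; the real application's threshold is not known.
MAX_TOKEN_LENGTH = 4096


def enforce_token_limit(token: str, max_length: int = MAX_TOKEN_LENGTH) -> str:
    """Trim an oversized token, but leave a trace that it happened."""
    if len(token) > max_length:
        # The log line the TODO asked for. Without it, the trimmed token
        # simply fails validation later with no hint as to why.
        logger.warning(
            "Login token truncated: length %d exceeds limit %d",
            len(token),
            max_length,
        )
        return token[:max_length]
    return token
```

With a warning like this in place, the first oversized token would likely have pointed the team at the safety check rather than at the authentication flow as a whole.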